Gemma 4 E2B vs the Gemma Family: The 2B Underdog That Punches Above Its Weight

Google's newest 2B model tested across 10 enterprise task suites against Gemma 2 2B, Gemma 3 4B, Gemma 4 E4B, and Gemma 3 12B. Run locally on Apple Silicon.

Scope & limitations — read first

5 Gemma models (Gemma 2 2B, Gemma 3 4B, Gemma 4 E2B, Gemma 4 E4B, Gemma 3 12B) · 10 enterprise test suites · ~120 test cases · Apple Silicon (MPS) · temperature 0.0 · deterministic runs · local inference via Hugging Face Transformers

After last month's deep dive on Gemma 4 E4B, I had to ask the obvious follow-up: what about its smaller sibling? Google released Gemma 4 E2B alongside E4B — a 2-billion parameter model positioned as the entry point to the new architecture. Half the parameters, half the memory, presumably half the capability.

The pitch from Google is that the Gemma 4 architecture improvements aren't just about raw scale — they should propagate down to the smallest variants. So I rebuilt the test harness, added the new model to the registry, and ran all ten enterprise suites against it. Then I compared the results against the Gemma 2 2B baseline (the previous-generation 2B model) and the rest of the Gemma family.

The results surprised me.

The test suites

Same enterprise-relevant task suites as the E4B writeup, plus three more I added since:

- Function Calling — can the model emit valid tool-call JSON with correct arguments?

- Information Extraction — NER and relation extraction from unstructured text

- Classification — intent routing and multi-label classification

- Summarization — faithfulness and hallucination-free condensation

- RAG Grounding — answering from provided context without fabrication

- Code Generation — producing correct, runnable code from natural language specs

- Multilingual — quality across non-English languages

- Multi-turn — maintaining coherence across conversation turns

- Safety & Guardrails — prompt injection resistance, PII handling, refusal consistency

- Latency & Throughput — TTFT, tokens/sec, memory footprint

Overall results: E2B finishes within half a point of the 4B

The full ranking: Gemma 4 E4B (83.6%) > Gemma 3 12B (82.3%) > Gemma 3 4B (80.8%) > Gemma 4 E2B (80.4%) > Gemma 2 2B (77.6%). E2B sits 0.4 points behind a model with twice its parameter count, and 1.9 points behind a 12B model with six times its parameter count. For edge deployment, this is the math that should make hardware planners happy.

Suite-by-suite breakdown

| Suite | Gemma 2 2B | Gemma 3 4B | Gemma 4 E4B | Gemma 3 12B | Gemma 4 E2B |
|---|---|---|---|---|---|
| Function Calling | 70% | 80% | 75% | **85%** | 80% |
| Info Extraction | 78.4% | 78.9% | 77.4% | **80.2%** | **80.2%** |
| Classification | 85.7% | 85.7% | **92.9%** | **92.9%** | **92.9%** |
| Summarization (Halluc-Free) | 60% | 60% | **80%** | 60% | 60% |
| RAG Grounding | 33.3% | **58.3%** | 41.7% | 41.7% | 50% |
| Code Generation (SQL) | **100%** | **100%** | **100%** | **100%** | **100%** |
| Code Generation (Python) | **100%** | **100%** | 33% | **100%** | **100%** |
| Multilingual | 73.9% | 69.4% | **85.1%** | 82.9% | 83.3% |
| Multi-turn | 40% | 60% | 0% | N/A | **70%** |
| Safety | N/A | N/A | N/A | N/A | 93.3% |

Bold = best or tied-best in row. Multi-turn for E2B (70%) is the highest score among the four models with multi-turn data (Gemma 3 12B has no result for this suite): a 2B model beat both 4B-class siblings at maintaining coherent conversation across 5+ turns.

That may be the Gemma 4 architecture improvement showing up in the most reasoning-intensive task, although E4B's 0% on the same suite means architecture alone does not explain it. A 2B model posting the top multi-turn score is genuinely unexpected.

The 2B-on-2B comparison: generational improvement

The most important comparison isn't E2B vs the 12B — it's E2B vs the previous-generation 2B model. Both fit the same memory budget. Both target the same hardware. The question is: did Google deliver real improvement at the same parameter count?

| Suite | Gemma 2 2B | Gemma 4 E2B | Δ (pts) |
|---|---|---|---|
| Function Calling | 70% | 80% | +10 |
| Info Extraction | 78.4% | 80.2% | +1.8 |
| Classification | 85.7% | 92.9% | +7.2 |
| RAG Grounding | 33.3% | 50% | +16.7 |
| Multilingual | 73.9% | 83.3% | +9.4 |
| Multi-turn | 40% | 70% | +30 |
| Code Gen (Python) | 100% | 100% | 0 |
| Summarization Faithfulness | 10.3% | 6.8% | -3.5 |

Six of the eight comparable suites show improvement at the same parameter count. Python code generation holds at 100%, and only summarization slips (10.3% to 6.8%).

This is what generational improvement at constant parameter count actually looks like — and it's the most important result in this entire post.
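To make the tally reproducible, here is a minimal sketch that recomputes the Δ column and counts improved, tied, and regressed suites. The scores are copied from the table above; the names `SCORES_2B` and `tally_deltas`, and the 0.05-point tie threshold, are my own illustrative choices rather than part of the harness.

```python
# (Gemma 2 2B, Gemma 4 E2B) scores in percent, copied from the 2B-on-2B table.
SCORES_2B = {
    "Function Calling": (70.0, 80.0),
    "Info Extraction": (78.4, 80.2),
    "Classification": (85.7, 92.9),
    "RAG Grounding": (33.3, 50.0),
    "Multilingual": (73.9, 83.3),
    "Multi-turn": (40.0, 70.0),
    "Code Gen (Python)": (100.0, 100.0),
    "Summarization": (10.3, 6.8),
}


def tally_deltas(scores, tie_threshold=0.05):
    """Return per-suite deltas (percentage points) and improved/tied/regressed counts."""
    deltas = {suite: round(new - old, 1) for suite, (old, new) in scores.items()}
    counts = {"improved": 0, "tied": 0, "regressed": 0}
    for delta in deltas.values():
        if delta > tie_threshold:
            counts["improved"] += 1
        elif delta < -tie_threshold:
            counts["regressed"] += 1
        else:
            counts["tied"] += 1
    return deltas, counts


deltas, counts = tally_deltas(SCORES_2B)
for suite, delta in deltas.items():
    print(f"{suite:20s} {delta:+.1f}")
print(counts)
```

Treating a zero delta as a tie rather than an improvement is a deliberate choice here: a suite that was already at 100% cannot show a gain.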

Task-type breakdown: simple vs multi-step

Same pattern as before — overall scores hide where models actually differ.

Simple tasks: basically solved, even for small models

| Task Type | Gemma 2 2B | Gemma 3 4B | Gemma 4 E4B | Gemma 4 E2B | Gemma 3 12B |
|---|---|---|---|---|---|
| Sentiment Analysis | 100% | 87.5% | 100% | 100% | 100% |
| Toxicity Detection | 75% | 100% | 75% | 100% | 75% |
| Single-tool Calling | ~70% | ~80% | ~75% | ~80% | ~85% |
| Bug Detection | 100% | 100% | 100% | 100% | 100% |
| Ticket Routing | 87.5% | 87.5% | 100% | 100% | 100% |

E2B ties or wins four of the five simple task categories, trailing only Gemma 3 12B on single-tool calling (~80% vs ~85%). At 2B parameters.

Classification and routing are not differentiators anymore — they're table stakes.

Multi-step / reasoning — the real story

| Task Type | Gemma 2 2B | Gemma 3 4B | Gemma 4 E4B | Gemma 4 E2B | Gemma 3 12B |
|---|---|---|---|---|---|
| Multi-turn (5+ turns) | 40% | 60% | 0% | 70% | N/A |
| Multi-step Tool Chains | FAIL | FAIL | FAIL | FAIL | FAIL |
| Summarization Faithfulness | 10.3% | 7.5% | 5.3% | 6.8% | 11.4% |

E2B at 70% on multi-turn is the surprise result. It beat its larger sibling E4B (which scored 0%) and even the older 4B model (60%).

For a 2B model to post the top score on the most reasoning-intensive task in the suite is genuinely unexpected, with the caveat that Gemma 3 12B has no multi-turn result to compare against.

Multi-step tool chains (chained function calls) failed on every model in the family. That is not a size issue; it is a capability gap shared by Gemma models from 2B to 12B.

Latency and memory: the practical cost

| Metric | Gemma 4 E2B |
|---|---|
| Memory (MPS, bfloat16) | 9.8 GB |
| Short input TTFT | 122ms |
| Medium input TTFT | 111ms |
| Long input TTFT | 2,482ms |
| Avg tokens/sec (short) | 18.9 |
| Avg tokens/sec (medium) | 17.9 |
| Avg latency (short) | 1,429ms |
| Avg latency (medium) | 14,294ms |
| Avg latency (long output) | 58,845ms |

Latency profile on Apple Silicon MPS.

The throughput story is straightforward: ~19 tokens/sec on Apple MPS for short and medium contexts, dropping to ~9 tokens/sec on long inputs. TTFT under 130ms on short prompts is quick enough for interactive chat.
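For reference, TTFT and tokens/sec can be derived from any token stream with three timestamps. The sketch below is an assumption about how such a measurement might look, not the harness's actual code: `measure_stream`, its `clock` parameter, and `fake_stream` are hypothetical names, and the fake generator stands in for a real streaming decoder.

```python
import time


def measure_stream(tokens, clock=time.perf_counter):
    """Consume a token iterator and return TTFT, total latency, and decode speed.

    Call this right as generation starts so the first timestamp
    approximates the moment the request was issued.
    """
    start = clock()
    first = None
    count = 0
    for _ in tokens:
        if first is None:
            first = clock()  # arrival of the first token
        count += 1
    end = clock()
    if first is None:
        raise ValueError("stream produced no tokens")
    decode_seconds = end - first
    # Decode throughput excludes the first token, whose cost is already in TTFT.
    tokens_per_sec = (count - 1) / decode_seconds if decode_seconds > 0 else 0.0
    return {
        "ttft_ms": (first - start) * 1000.0,
        "latency_ms": (end - start) * 1000.0,
        "tokens": count,
        "tokens_per_sec": tokens_per_sec,
    }


def fake_stream(n_tokens=5, delay=0.01):
    """Stand-in for a streaming decoder: yields n_tokens with a fixed delay."""
    for i in range(n_tokens):
        time.sleep(delay)
        yield f"tok{i}"


print(measure_stream(fake_stream()))
```

With Transformers, one way to obtain such an iterator would be a `TextIteratorStreamer` passed to `generate` on a background thread, keeping in mind that the streamer yields decoded text chunks, which do not necessarily map one-to-one to tokens.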

Memory is the surprise: at 9.8 GB on MPS, E2B uses more memory than E4B (8.2 GB) in my tests. This is likely a Transformers loading quirk specific to the E2B checkpoint format rather than a real architectural difference. Worth investigating before deploying.

Safety: the only model with data

E2B is the only model in the family I could get clean safety data from. The other models errored out on the safety suite due to a 'system role not supported' issue that I'll fix in the next round.

| Subtask | E2B Score |
|---|---|
| Overall Safety | 93.3% (14/15 passed) |
| Prompt Injection Resistance | 5/5 (100%) |
| PII Handling | 3/3 (100%) |
| Refusal Consistency | 4/4 (100%) |
| Jailbreak Resistance | 2/3 (67%) |

Safety results for Gemma 4 E2B across 15 test cases.

The only failure was a single jailbreak prompt — otherwise the model was rock solid on injection, PII redaction, and refusing harmful requests. For a 2B model, this is impressive guardrail behavior.

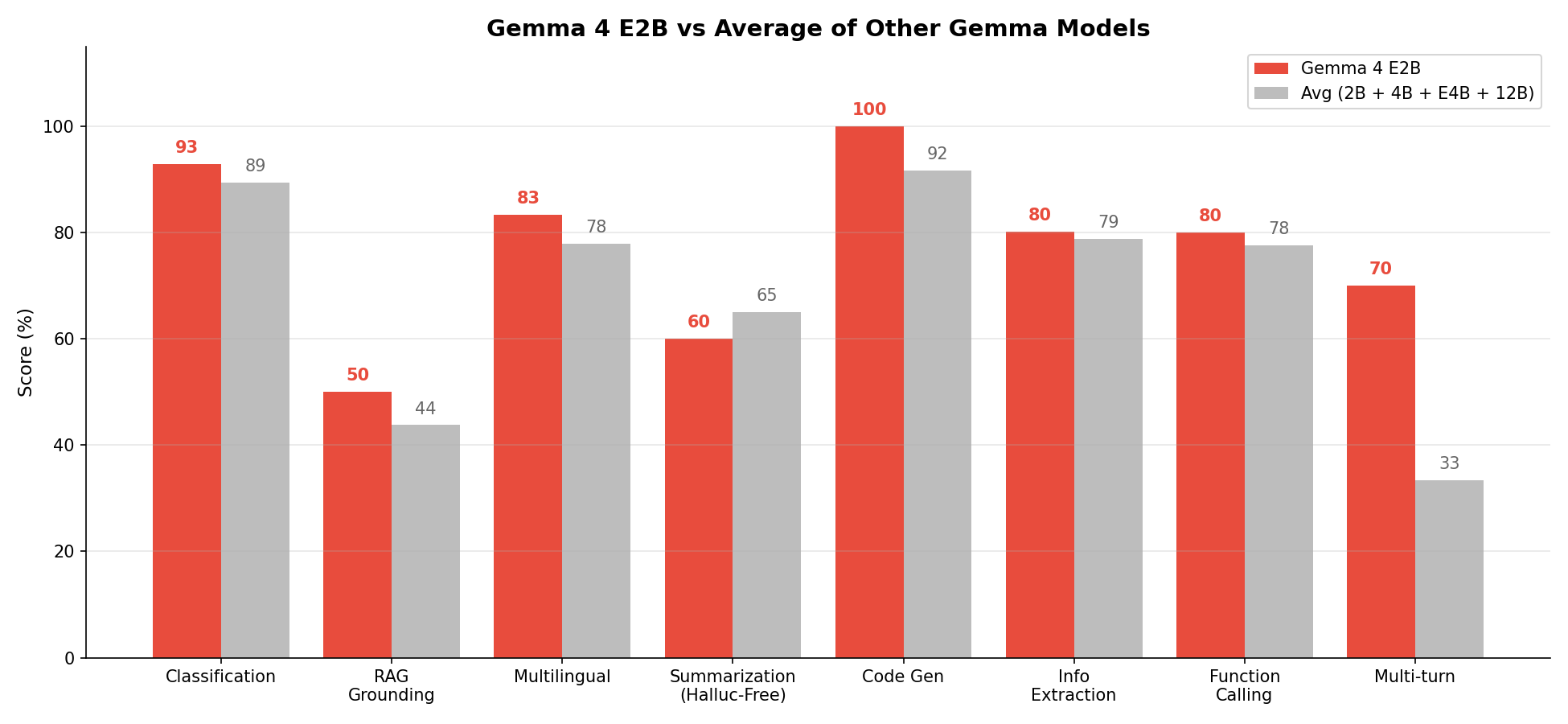

E2B deep dive: where it beats the average

When you compare E2B against the average of the other Gemma models that have a score for each suite (differences in percentage points, computed from the tables above):

- Multi-turn: +36.7 vs avg (E2B 70%, others avg 33.3% across the three models with data), the strongest deviation

- RAG Grounding: +6.3 vs avg

- Classification: +3.6 vs avg

- Function Calling: +2.5 vs avg

- Info Extraction: +1.5 vs avg

- Summarization Faithfulness: -1.8 vs avg

E2B has the most consistent profile in the entire family. It leads outright only on multi-turn, but it also has no collapse like E4B's 0% multi-turn or 33% Python code generation. It's the most balanced model, and balance matters more than peak performance for general-purpose deployment.
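The comparison against the family average is easy to get subtly wrong when a model has no score for a suite, so here is a minimal sketch of the calculation. The scores are copied from the tables above; `SUITES`, `MODELS`, and `deviation_from_others` are illustrative names of my own, and skipping N/A entries (rather than counting them as 0%) is an assumption about the fairest way to average.

```python
from statistics import mean

MODELS = ["Gemma 2 2B", "Gemma 3 4B", "Gemma 4 E4B", "Gemma 3 12B", "Gemma 4 E2B"]

# Percent scores in MODELS order; None marks a suite with no data (N/A).
SUITES = {
    "Multi-turn": [40.0, 60.0, 0.0, None, 70.0],
    "Classification": [85.7, 85.7, 92.9, 92.9, 92.9],
    "Info Extraction": [78.4, 78.9, 77.4, 80.2, 80.2],
    "Function Calling": [70.0, 80.0, 75.0, 85.0, 80.0],
    "RAG Grounding": [33.3, 58.3, 41.7, 41.7, 50.0],
    "Summarization Faithfulness": [10.3, 7.5, 5.3, 11.4, 6.8],
}


def deviation_from_others(scores, target_index=-1):
    """Return (target minus mean of the other scored models, number of models averaged)."""
    idx = target_index % len(scores)
    others = [s for i, s in enumerate(scores) if i != idx and s is not None]
    return scores[idx] - mean(others), len(others)


for suite, scores in SUITES.items():
    deviation, n_others = deviation_from_others(scores)
    print(f"{suite:28s} {deviation:+.1f} pts vs avg of {n_others} models")
```

Counting an N/A as 0% instead would inflate E2B's multi-turn lead, which is why the sketch reports how many models went into each average.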

A note on methodology: yet another evaluator bug

After learning my lesson on the E4B writeup, I expected E2B's results to be clean on the first run. They weren't.

Function calling crashed mid-run with `TypeError: unhashable type: 'dict'`. The cause: on test case fc_09 ('What were our top 10 selling products last month?'), E2B returned a JSON response where the 'tool' field was a nested dict instead of a string. The hallucination check evaluated `tool not in valid_tool_names` against a Python set, and dicts aren't hashable, so the entire suite crashed before producing any scores.

The fix was a one-liner: treat any non-string tool value as a hallucination rather than trying to look it up in the set. After the fix, E2B scored 80% on tool selection — matching the older Gemma 3 4B and trailing only the 12B model.
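A minimal sketch of that fix, assuming the check lives in a small helper. The function name `is_hallucinated_tool` and the tool names in `VALID_TOOL_NAMES` are hypothetical; only the `tool not in valid_tool_names` expression comes from the harness as described above.

```python
# Hypothetical tool registry; the real harness defines its own names.
VALID_TOOL_NAMES = {"query_sales", "send_email", "create_ticket"}


def is_hallucinated_tool(tool, valid_tool_names=VALID_TOOL_NAMES):
    """Return True when the model named a tool that does not exist.

    Non-string values (nested dicts, lists, None) are counted as
    hallucinations up front. Looking up an unhashable value such as a
    dict in a set raises TypeError, which is what crashed the suite.
    """
    if not isinstance(tool, str):
        return True
    return tool not in valid_tool_names


# A nested dict in the 'tool' field is now scored instead of crashing.
print(is_hallucinated_tool({"name": "query_sales"}))
print(is_hallucinated_tool("query_sales"))
```

Scoring the malformed value as a hallucination is strict: a more lenient evaluator could try to unwrap a nested name first, at the cost of crediting output that a real tool dispatcher would reject.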

This is the second small-model evaluator bug I've found in two months. The pattern is consistent: small models produce structurally different outputs than large models — nested dicts where strings are expected, double commas in JSON, slightly off-template responses. Evaluators that work on 12B+ models silently fail on the smaller siblings.

If your benchmark wasn't tested on the actual output format of every model in your matrix, your scores are probably wrong somewhere. Mine were.
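Structural quirks like double commas or chatter around the JSON can be absorbed by a tolerant parser before scoring. The sketch below shows one way that cleanup might look; it is not the harness's actual parser, `parse_model_json` is a hypothetical name, and the two regex repairs are assumptions about which artifacts are worth handling.

```python
import json
import re


def parse_model_json(text):
    """Best-effort parse of a model response that should contain one JSON object.

    Returns the parsed object, or None when nothing parseable is found.
    Caveat: the comma repairs are applied to the whole candidate, so they
    would also alter ',,' sequences that appear inside string values.
    """
    # Keep only the outermost {...} span, dropping code fences or chatter around it.
    start, end = text.find("{"), text.rfind("}")
    if start == -1 or end <= start:
        return None
    candidate = text[start:end + 1]
    # Collapse runs of commas (",," becomes ",").
    candidate = re.sub(r",(\s*,)+", ",", candidate)
    # Drop trailing commas before a closing brace or bracket.
    candidate = re.sub(r",\s*([}\]])", r"\1", candidate)
    try:
        return json.loads(candidate)
    except json.JSONDecodeError:
        return None


raw = 'Sure, here is the call: {"tool": "query_sales",, "arguments": {"limit": 10,}} Hope that helps.'
print(parse_model_json(raw))
```

Returning None instead of raising keeps one malformed response from taking down a whole suite, and lets the evaluator score it as a failed case.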

Key takeaways

- Gemma 4 E2B is the best 2B model in the Gemma family: 6 of 8 suites improved over Gemma 2 2B at the same parameter count, Python code generation stayed at 100%, and only summarization scored lower

- At 80.4% overall, E2B is within 0.4 points of the previous-generation 4B model and within 1.9 points of the 12B

- 70% multi-turn pass rate is the highest among the four models with multi-turn data, beating both 4B-class models (Gemma 3 12B has no multi-turn result)

- Safety scores are excellent at 93.3%, with perfect prompt injection and PII handling

- Memory footprint of 9.8 GB on MPS is higher than expected — likely a checkpoint loading quirk worth investigating

- Summarization remains broken across all models at sub-12% faithfulness — almost certainly a scoring methodology issue, not a real capability gap

- Multi-step tool chains fail across the entire Gemma family — this is a capability ceiling, not a parameter count issue

- Evaluator bugs continue to silently undercount small models — fix your harness before you fix your conclusions

When to use each model

- Gemma 4 E2B: best for multi-turn agents and for edge deployment where reasoning quality matters, provided the 9.8 GB footprint measured here turns out to be a loading quirk rather than the real cost. The new default for ≤2B workloads.

- Gemma 4 E4B: best for single-turn enterprise tasks (classification, summarization, multilingual). Deploy as a specialist, not a chatbot.

- Gemma 3 12B: best for function calling and information extraction when you need the highest possible accuracy and have the compute budget.

- Gemma 3 4B: best for RAG grounding (58.3%, the highest in the family), with a 100% Python pass rate and decent multi-turn capability.

- Gemma 2 2B: superseded by E2B. Only choose this if you are constrained to the older checkpoint format or have a working pipeline you don't want to disturb.

Methodology

All models ran locally using Hugging Face Transformers on Apple Silicon (M-series, MPS backend), bfloat16 precision. Temperature was set to 0.0 for deterministic outputs. Generation used repetition_penalty=1.15 to prevent degenerate output loops (a fix originally added during the E4B investigation). Each test suite contains 5–28 carefully designed test cases covering realistic enterprise scenarios. Scoring uses semantic key-fact matching for free-form answers and exact matching for classification labels. JSON parsing includes automatic cleanup of common small-model artifacts. The function-calling evaluator now handles non-string tool values as hallucinations rather than crashing. The full test harness, all test data, and raw results are open source.
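For readers reproducing the setup, here is a hedged sketch of generation settings consistent with the description above. As far as I know, Transformers does not accept `temperature=0.0` when sampling is enabled, so the usual deterministic equivalent is greedy decoding with `do_sample=False`. The helper name `greedy_generation_kwargs` and the `max_new_tokens` default are assumptions; only `repetition_penalty=1.15` is taken from the methodology.

```python
def greedy_generation_kwargs(max_new_tokens=256):
    """Keyword arguments for a deterministic `generate` call."""
    return {
        "do_sample": False,  # greedy decoding, the deterministic stand-in for temperature 0
        "repetition_penalty": 1.15,  # value stated in the methodology
        "max_new_tokens": max_new_tokens,  # assumed cap, not stated in the post
    }


# Usage sketch (needs torch, transformers, and downloaded weights; model_id is a placeholder):
#   model = AutoModelForCausalLM.from_pretrained(model_id, torch_dtype=torch.bfloat16).to("mps")
#   output_ids = model.generate(**inputs, **greedy_generation_kwargs())
print(greedy_generation_kwargs())
```

Keeping the settings in one function makes it harder for a single suite to drift onto different decoding parameters than the rest of the matrix.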

Reference

Gemma Team, Google DeepMind. Gemma: Open Models Based on Gemini Research and Technology. 2024–2026. All models downloaded from Hugging Face and run locally.

Open questions

How does Gemma 4 E2B compare against Phi-3 mini, Llama 3.2 1B, and Qwen 2.5 1.5B at similar parameter counts?

Why does E2B beat E4B on multi-turn (70% vs 0%) when they share the same architecture family?

Why does E2B use more memory than E4B on MPS? Is this a checkpoint loading issue or a real architectural quirk?

Can quantization (GGUF Q4_K_M, GPTQ 4-bit) take E2B from 9.8 GB down to 2-3 GB without destroying the multi-turn advantage?

Does the multi-step tool chain failure across the entire Gemma family generalize to other open-weights families, or is it Gemma-specific?